Diffraction

Diffraction is something most photographers have heard about, but not many know what it really means.

To most people it is a phenomenon they do not have to worry about, but macro photographers know that it is one of the main factors affecting final image quality.

In traditional photography, or when using wide apertures, diffraction has no visible effect; in fact, what limits quality at wide apertures is the quality of the lens itself.

However, as we start to close the aperture ring, diffraction starts to play with us. On an APS-C camera at f32 it does not really matter whether we use a 2000€ prime or a crappy kit zoom lens; both images will have essentially the same quality.

Some owners of Canon's MP-E 65mm who do high magnification work may say "my MP-E at 4X and f11 is a lemon", and this is because the effective aperture goes up to f55 (the nominal aperture multiplied by magnification plus one: 11 × (4 + 1) = 55). Effective aperture is diffraction's closest friend.
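The f55 figure follows from the usual bellows-factor approximation, where the effective aperture is the nominal aperture multiplied by (magnification + 1). A minimal Python sketch, assuming a lens with a pupil magnification of 1 (real lenses, the MP-E included, may deviate somewhat); the function name is only illustrative:

```python
def effective_aperture(nominal_f_number: float, magnification: float) -> float:
    """Approximate effective f-number at a given magnification.

    Assumes a pupil magnification of 1 (simple symmetric-lens approximation).
    """
    return nominal_f_number * (1.0 + magnification)


# Nominal f11 at increasing magnifications
for m in (1, 2, 3, 4, 5):
    print(f"{m}X at f11 -> effective f{effective_aperture(11, m):.0f}")
```

At 4X this gives the f55 quoted above, and it shows how quickly the effective aperture grows with magnification.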

But small apertures are not responsible for diffraction problems on their own (medium format users use small apertures without worries); pixel density also plays a part.

Normally, small sensors have smaller pixels and higher pixel density (have you ever heard about the mpx race?). I am positive some 12mpx compact cameras would have better image quality with optimized 3 to 5mpx sensors.

I am not going to go too deep into diffraction theory, as there are already excellent articles online.

In English: Cambridge in Colour. In Spanish: Akvis.

In a few words: diffraction is a physical phenomenon in which light deviates when it meets an obstacle (here, the edges of the aperture diaphragm). When the lens is wide open, light rays get into the camera freely, but as you close the iris these rays bend and spread out, lowering the resolution that reaches the sensor.

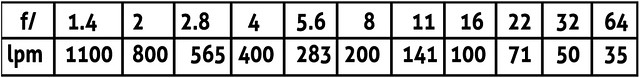

Theoretical resolution limits are measured in line pairs per mm and are:

difraccion 01
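Limits of this kind can be approximated from the f-number alone. The sketch below is only an illustration and not necessarily the method behind the table: it assumes green light at 550 nm and shows two common criteria, the absolute MTF cutoff 1/(λN) and the more conservative Rayleigh limit 1/(1.22λN). The function names are hypothetical.

```python
WAVELENGTH_MM = 0.00055  # assumption: green light, 550 nm, expressed in mm


def diffraction_cutoff_lp_mm(f_number: float, wavelength_mm: float = WAVELENGTH_MM) -> float:
    """Spatial frequency where contrast drops to zero: 1 / (lambda * N)."""
    return 1.0 / (wavelength_mm * f_number)


def rayleigh_limit_lp_mm(f_number: float, wavelength_mm: float = WAVELENGTH_MM) -> float:
    """Rayleigh criterion expressed in line pairs per mm: 1 / (1.22 * lambda * N)."""
    return 1.0 / (1.22 * wavelength_mm * f_number)


# For macro work, pass the effective aperture rather than the nominal one.
for n in (2.8, 4, 5.6, 8, 11, 16, 22, 32):
    print(f"f{n}: cutoff {diffraction_cutoff_lp_mm(n):.0f} lp/mm, "
          f"Rayleigh {rayleigh_limit_lp_mm(n):.0f} lp/mm")
```

With these assumptions the Rayleigh limit is about 186 lp/mm at f8 and about 47 lp/mm at f32; a different wavelength or criterion shifts the numbers but not the trend.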

Because of the moiré effect, cameras have an anti-aliasing (AA) filter, and because Bayer sensors use one pixel per primary colour, the light from a single point can spread over 2-3 pixels before diffraction starts to affect the image.

There is a company that removes those AA filters; here you can see some examples: HOTROD

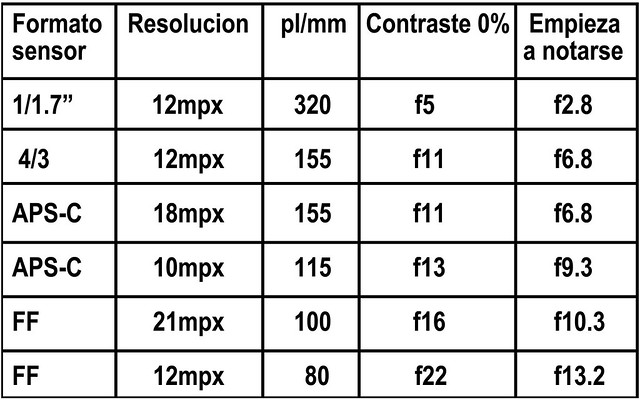

Each line pair needs two pixels (one dark and one bright). Theoretical limits for some cameras are:

difraccion 02
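The two-pixels-per-line-pair rule translates directly into a sensor limit: the number of pixels across the sensor divided by twice the sensor width. The sketch below assumes approximate published sensor widths and pixel counts for three Canon bodies mentioned in this article; these figures are assumptions for illustration and are not taken from the table above. The result ignores the Bayer array and the AA filter.

```python
def pixel_pitch_um(sensor_width_mm: float, pixels_across: int) -> float:
    """Distance between pixel centres in micrometres."""
    return sensor_width_mm / pixels_across * 1000.0


def nyquist_lp_mm(sensor_width_mm: float, pixels_across: int) -> float:
    """Sensor limit in line pairs per mm, at two pixels per line pair."""
    return pixels_across / (2.0 * sensor_width_mm)


# Assumed, approximate specs: (sensor width in mm, horizontal pixel count)
CAMERAS = {
    "EOS 5D": (35.8, 4368),
    "EOS 5D Mark II": (36.0, 5616),
    "EOS 7D": (22.3, 5184),
}

for name, (width_mm, pixels) in CAMERAS.items():
    print(f"{name}: pitch {pixel_pitch_um(width_mm, pixels):.1f} um, "
          f"limit {nyquist_lp_mm(width_mm, pixels):.0f} lp/mm")
```

With these inputs the limits come out at about 61, 78 and 116 lp/mm respectively.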

We can see that even in high-end compacts, diffraction starts to affect image quality as soon as you turn them on. Canon has caught up with 4/3 cameras in pixel density, and the new Sony sensors have gone even further, reaching 24mpx.

We can also see that the good reputation of the EOS 5D classic's sensor has something to do with its low pixel count. The first time I saw a 5D shot at 100% on the screen I was astonished. In my opinion the pixel density of the EOS 5D mkII is already too high, at least for extreme macro work. I find myself using SRaw1 and 2 quite often, as the normal RAW has no advantage over these SRAW modes in high magnification work (over 20X).

You also have to bear in mind that these numbers are theoretical maximums and our results will be below them most of the time. Sensor technology is always improving, and elements like gapless microlenses can improve final resolution, whereas things like the Bayer array and AA filters can lower it.

No matter how much sensors evolve, these numbers give the maximum resolution possible; no technology can avoid diffraction (although it is true that some detail is also lost to flaws in current sensor technology).

At The Digital Picture you can see how diffraction starts to affect image quality on an EOS 7D at f5.6; at f8 there is some resolution loss.
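One rough way to see why f5.6 and f8 are the turning points is to compare the diameter of the Airy disk (about 2.44 × wavelength × f-number) with the pixel size. This sketch assumes 550 nm light and a pixel pitch of roughly 4.3 µm for the EOS 7D, which is an approximation rather than a measured value; the function names are illustrative.

```python
def airy_disk_diameter_um(f_number: float, wavelength_um: float = 0.55) -> float:
    """Diameter of the Airy disk (to the first dark ring) in micrometres."""
    return 2.44 * wavelength_um * f_number


def airy_disk_in_pixels(f_number: float, pixel_pitch_um: float,
                        wavelength_um: float = 0.55) -> float:
    """How many pixel widths the Airy disk spans."""
    return airy_disk_diameter_um(f_number, wavelength_um) / pixel_pitch_um


EOS_7D_PITCH_UM = 4.3  # assumption: approximate pixel pitch of the EOS 7D

for n in (4, 5.6, 8, 11, 16):
    print(f"f{n}: Airy disk spans "
          f"{airy_disk_in_pixels(n, EOS_7D_PITCH_UM):.1f} pixels")
```

Under these assumptions the disk covers roughly 1.7 pixels at f5.6 and 2.5 pixels at f8, which is in line with the 2-3 pixel allowance mentioned earlier.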

So, when you start focus stacking, try to keep within values where diffraction will not affect your results too much; this becomes more difficult as magnification goes up.